The LLM Integration Playbook

AI systems are failing in production at rates that would be unacceptable in any other area of software engineering. The problem isn't the technology—it's that most teams are building on fundamentally flawed assumptions about how LLMs actually work.

TL;DR

AI systems are failing in production at rates that would be unacceptable in any other area of software engineering. RAG systems return irrelevant results, agents burn through budgets without completing tasks, and 'optimized' implementations cost 10x more than necessary. The problem isn't the technology—it's that most teams are building on fundamentally flawed assumptions about how LLMs actually work.

If you built those three AI apps from the last article, you probably discovered something frustrating: getting an LLM to work in a demo is easy. Getting it to work reliably in production is a nightmare.

Your document Q&A system works great on that clean PDF you tested with. But when users start uploading scanned documents with terrible OCR, 300+ page manuals, and files in languages your embeddings model never saw during training, you're in for a bad ride.

Your code reviewer gives brilliant insights on simple functions. But it completely misses a critical SQL injection vulnerability in a complex enterprise codebase.

Welcome to the reality of LLM integration. The demo is 20% of the work. The other 80% is handling all the ways these models can fail in the real world.

This is where most AI projects die. Companies get excited about the prototype, then spend six months trying to make it production-ready, burn through their budget on API costs, and eventually give up. I've been there.

Prompt Engineering That Actually Works (Not the Fluff)

Prompt engineering isn't the silver bullet everyone claims. Most advice focuses on superficial tweaks that don't address fundamental system design problems. Here's what actually improves performance:

1. Structure Your Prompts Like APIs

Treat prompts like function calls with clear inputs and expected outputs.

Bad prompt:

"Analyze this code and tell me what's wrong with it"

Good prompt:

SYSTEM_PROMPT = """
You are a senior software engineer doing code review.

INPUT: Source code in any programming language

OUTPUT: JSON object with this exact structure:
{
    "bugs": ["specific bug descriptions"],
    "security_issues": ["security vulnerabilities found"],
    "performance_issues": ["performance problems"],
    "suggestions": ["improvement recommendations"],
    "severity": "LOW|MEDIUM|HIGH"
}

RULES:
- Only report actual issues, not style preferences
- Include line numbers when possible
- Be specific about why something is a problem
- If no issues found, return empty arrays
"""

def create_code_review_prompt(code: str, language: str) -> list:
    return [
        {"role": "system", "content": SYSTEM_PROMPT},
        {"role": "user", "content": f"Language: {language}\n\nCode:\n```{language}\n{code}\n```"}
    ]

2. Use Examples to Lock Down Behavior

One good example is worth a thousand words of explanation.

# Plain string, not an f-string. Fill it with
# FEW_SHOT_PROMPT.replace("{ticket_text}", ticket): str.format() would
# trip over the literal JSON braces in the examples.
FEW_SHOT_PROMPT = """
Extract key information from customer support tickets.

Example 1:
Input: "My order #12345 never arrived and I need it by Friday for my presentation. This is urgent!"
Output: {"order_id": "12345", "issue": "missing_delivery", "urgency": "high", "deadline": "Friday"}

Example 2:
Input: "The app keeps crashing when I try to upload photos. It's happened 3 times today."
Output: {"order_id": null, "issue": "app_crash", "urgency": "medium", "frequency": "3 times today"}

Now extract information from this ticket:
{ticket_text}
"""

3. Chain Prompts for Complex Tasks

Don't try to do everything in one prompt. Break complex tasks into steps.

from typing import Dict, List

class DocumentAnalyzer:
    def __init__(self, llm_client):
        self.llm = llm_client

    async def analyze_contract(self, contract_text: str) -> Dict:
        # Step 1: Extract key entities
        entities = await self._extract_entities(contract_text)

        # Step 2: Identify potential risks
        risks = await self._identify_risks(contract_text, entities)

        # Step 3: Generate summary
        summary = await self._generate_summary(contract_text, entities, risks)

        return {
            "entities": entities,
            "risks": risks,
            "summary": summary
        }

    async def _extract_entities(self, text: str) -> Dict:
        prompt = f"""
        Extract these entities from the contract:
        - parties (companies/people involved)
        - dates (start, end, deadlines)
        - monetary_amounts (payments, penalties)
        - obligations (what each party must do)

        Contract: {text}

        Return as JSON.
        """
        # Implementation details...

    async def _identify_risks(self, text: str, entities: Dict) -> List:
        prompt = f"""
        Given this contract and extracted entities, identify potential legal/business risks:

        Entities: {entities}
        Contract: {text}

        Focus on:
        - Unclear obligations
        - Missing termination clauses
        - Unfavorable payment terms
        - Liability issues

        Return as JSON array.
        """
        # Implementation details...

RAG Systems, Function Calling, Agents - What Actually Works

RAG systems are everywhere, but most fail to deliver reliable results in production. The problem isn't just poor implementation—it's a fundamental misunderstanding of how vector embeddings actually work. Here's what teams consistently get wrong:

Failure Mode #1: Treating Vector Search Like Google

Most developers think: "I'll embed my documents, embed my query, find the closest matches—boom, RAG!"

This is naive. Vector similarity finds topically related content, not contextually relevant answers. Your query about "database performance issues" might return chunks about "database security" because they share vocabulary, while missing the chunk that actually explains your specific performance bottleneck.
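One way to compensate is a second pass that re-scores the vector-search candidates against the query itself. The sketch below is a minimal illustration under stated assumptions: `rerank` and `keyword_overlap` are hypothetical names, and the overlap scorer is a deliberately crude stand-in for a real relevance model (a cross-encoder, for example), which would be passed in as `score_fn`.

```python
from typing import Callable, List, Tuple


def keyword_overlap(query: str, text: str) -> float:
    """Toy relevance score: fraction of query words that appear in the text."""
    query_words = set(query.lower().split())
    text_words = set(text.lower().split())
    if not query_words:
        return 0.0
    return len(query_words & text_words) / len(query_words)


def rerank(
    query: str,
    candidates: List[str],
    score_fn: Callable[[str, str], float] = keyword_overlap,
    top_k: int = 3,
) -> List[Tuple[float, str]]:
    """Re-score first-stage candidates and keep the best top_k."""
    scored = [(score_fn(query, text), text) for text in candidates]
    # Highest score first; the sort is stable, so ties keep first-stage order
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return scored[:top_k]


candidates = [
    "Database security requires encrypted connections and least privilege.",
    "Slow queries usually indicate database performance issues from missing indexes.",
    "Deployment notes for the staging environment.",
]
top = rerank("database performance issues", candidates, top_k=2)
```

Here the chunk about slow queries moves ahead of the security chunk, even though both mention databases. The scorer is the part worth investing in; the surrounding plumbing stays the same.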

Failure Mode #2: Garbage In, Garbage Out Chunking

The typical approach, text.split('\n\n') or fixed 500-character chunks that slice sentences in half, destroys semantic coherence.

When you embed a chunk containing three unrelated paragraphs—say, API documentation, error handling, and deployment notes—the resulting vector is semantic soup. It's equally distant from queries about any of those topics, making retrieval essentially random.

Failure Mode #3: Context Window Ignorance

Most systems grab the "top 5" chunks and dump them into the LLM, regardless of:

- Total token count (hello, truncated context)

- Chunk overlap (redundant information eating tokens)

- Relevance distribution (chunk #5 might be completely irrelevant)

This wastes precious context window real estate and confuses the LLM with contradictory or tangential information.
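The three problems above suggest selecting chunks against an explicit budget instead of a fixed top-k. What follows is a minimal sketch, assuming each result carries a `score` where lower means closer (as with a distance) and approximating tokens as characters divided by four. The names `select_context` and `max_distance`, and the 4-characters-per-token ratio, are illustrative assumptions, not values from any particular library.

```python
from typing import Dict, List


def approx_tokens(text: str) -> int:
    """Rough token estimate: about 4 characters per token (an assumption)."""
    return max(1, len(text) // 4)


def select_context(
    results: List[Dict],
    token_budget: int = 1500,
    max_distance: float = 0.5,
) -> List[Dict]:
    """Pick chunks best-first, skipping weak matches and exact duplicates."""
    selected: List[Dict] = []
    seen_texts = set()
    used = 0
    for item in sorted(results, key=lambda r: r["score"]):
        if item["score"] > max_distance:
            break  # everything after this is even less relevant
        normalized = " ".join(item["text"].split()).lower()
        if normalized in seen_texts:
            continue  # identical chunk already included
        cost = approx_tokens(item["text"])
        if used + cost > token_budget:
            continue  # too big for the remaining budget; try smaller chunks
        selected.append(item)
        seen_texts.add(normalized)
        used += cost
    return selected


results = [
    {"text": "Index the orders table on customer_id.", "score": 0.12},
    {"text": "Index the orders  table on customer_id.", "score": 0.13},
    {"text": "Office holiday schedule for December.", "score": 0.91},
]
chosen = select_context(results)
```

Only the first chunk survives: the second is a whitespace variant of it, and the third falls outside the relevance cutoff. A real system would count tokens with the model's tokenizer and tune the cutoff on its own data.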

Failure Mode #4: No Understanding of Embedding Limitations

Vector embeddings are terrible at:

- Understanding negation ("not recommended" vs "recommended")

- Handling rare or domain-specific terminology

- Distinguishing between concepts that share vocabulary but have different meanings

Yet most RAG systems treat them as infallible databases.
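A common mitigation for the rare-terminology blind spot is to run plain keyword search alongside vector search, so exact terms still count, and then merge the two rankings. The sketch below uses reciprocal rank fusion for the merge. The function name, the document IDs, and the constant `k=60` (a conventional default) are assumptions for illustration, and this does nothing for negation, which needs a stronger relevance model.

```python
from collections import defaultdict
from typing import Dict, List


def reciprocal_rank_fusion(rankings: List[List[str]], k: int = 60) -> List[str]:
    """Merge several ranked lists of document IDs into one ranking.

    Each document earns 1 / (k + rank) from every list it appears in,
    so items ranked well by multiple retrievers rise to the top.
    """
    scores: Dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    return sorted(scores, key=lambda doc_id: scores[doc_id], reverse=True)


vector_hits = ["doc_a", "doc_b", "doc_c"]   # from embedding similarity
keyword_hits = ["doc_c", "doc_a", "doc_d"]  # from exact-term search
fused = reciprocal_rank_fusion([vector_hits, keyword_hits])
```

Documents that both retrievers agree on (`doc_a`, `doc_c`) end up ahead of documents only one of them found.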

What Actually Works

Real production RAG requires addressing each of these failure modes systematically:

import chromadb
from sentence_transformers import SentenceTransformer
import asyncio
from typing import List, Dict

class ProductionRAG:
    def __init__(self, collection_name: str):
        self.client = chromadb.PersistentClient()
        self.collection = self.client.get_or_create_collection(collection_name)
        self.encoder = SentenceTransformer('all-MiniLM-L6-v2')

    def chunk_document(self, text: str, chunk_size: int = 1000, overlap: int = 200) -> List[str]:
        """Smart chunking that maintains semantic coherence

        Why sentence boundaries matter: Embedding models create vector
        representations based on semantic relationships. A chunk mixing
        unrelated concepts produces a weak, confused vector that matches
        poorly with specific queries.

        Why overlap matters: Context at chunk boundaries often contains
        critical relationships. Without overlap, you lose the semantic
        bridges between concepts.
        """
        # Naive sentence split; swap in a real sentence tokenizer for production
        pieces = (p.strip().rstrip('.') for p in text.split('. '))
        sentences = [s for s in pieces if s]
        chunks = []
        current = []  # sentences in the chunk being built

        for sentence in sentences:
            candidate = '. '.join(current + [sentence])
            if current and len(candidate) > chunk_size:
                chunks.append('. '.join(current) + '.')
                # Preserve semantic continuity: carry trailing sentences
                # forward, up to roughly `overlap` characters
                carried, carried_len = [], 0
                for prev in reversed(current):
                    if carried_len + len(prev) > overlap:
                        break
                    carried.insert(0, prev)
                    carried_len += len(prev)
                current = carried
            current.append(sentence)

        if current:
            chunks.append('. '.join(current) + '.')
        return chunks

    async def search(self, query: str, n_results: int = 5) -> List[Dict]:
        """Search with re-ranking to fix vector similarity limitations

        Why re-ranking matters: Initial vector search finds topically
        similar content, but LLMs need contextually relevant content.
        Re-ranking bridges this gap by considering query intent, not
        just vocabulary overlap.
        """
        query_embedding = self.encoder.encode([query])[0].tolist()

        # Cast a wide net initially - vector search is imprecise
        results = self.collection.query(
            query_embeddings=[query_embedding],
            n_results=n_results * 2  # Get more for better re-ranking
        )

        # Collect candidates with their vector distances (lower = closer).
        # This is the hook for a real re-ranker: sorting by distance alone
        # only reproduces the vector-search order.
        scored_results = []
        for i, doc in enumerate(results['documents'][0]):
            score = results['distances'][0][i]
            scored_results.append({
                'text': doc,
                'metadata': results['metadatas'][0][i],
                'score': score
            })

        scored_results.sort(key=lambda x: x['score'])
        return scored_results[:n_results]

    async def answer_question(self, question: str, llm_client) -> str:
        """RAG pipeline with intelligent context management

        Why context management matters: LLMs perform worse with
        irrelevant context than with no context at all. Better to
        include fewer, highly relevant chunks than to fill the
        context window with noise.
        """
        relevant_chunks = await self.search(question, n_results=5)

        # Smart context window usage - quality over quantity
        context = ""
        for chunk in relevant_chunks:
            new_context = context + f"\n\nSource: {chunk['text']}"
            if len(new_context) > 6000:  # Characters, not tokens; preserve room for reasoning
                break
            context = new_context

        prompt = f"""
        Answer based on the provided context. If the context doesn't
        contain sufficient information, explicitly state what's missing.

        Context:
        {context}

        Question: {question}

        Answer:
        """

        return await llm_client.chat_completion([
            {"role": "user", "content": prompt}
        ])

The difference between broken RAG and working RAG isn't in the libraries you use. It's understanding that vector similarity is a crude approximation of relevance, and building systems that compensate for its limitations rather than blindly trusting it.

Function Calling: When to Use It vs. When to Avoid It

Function calling is powerful but massively overused. Most developers reach for it when they should be using simpler approaches.

Why Function Calling Is Overengineered for Most Use Cases

Function calling adds complexity, latency, and failure modes. Yet developers treat it as the default solution for any task that needs "structured output." This is backwards thinking.

The Real Problem: Developers Don't Trust Prompts

Most function calling implementations exist because developers assume LLMs can't follow instructions reliably. So instead of writing better prompts, they build elaborate function schemas to "force" structured behavior.

This is like using a sledgehammer to hang a picture frame.

When Function Calling Actually Makes Sense

Function calling shines in three specific scenarios:

1. Dynamic API Integration: When you need to call different APIs based on user intent, and the parameters are genuinely unpredictable:

# User: "Book a flight to Paris next Tuesday and reserve a hotel near the Louvre"

# LLM needs to decide: flight_booking_api vs hotel_booking_api

# Parameters vary wildly: dates, locations, preferences

2. Multi-Step Workflows with Branching Logic: When the LLM needs to decide the sequence of operations based on context:

# User: "Analyze my sales data and send a report to my team"

# Could be: fetch_data() → analyze() → generate_report() → send_email()

# Or: fetch_data() → detect_insufficient_data() → request_more_info()

3. Database Queries with Complex Filtering: When you need dynamic query construction, and interpolating model output directly into SQL would open the door to SQL injection:

# User: "Show me customers who bought Product X but not Product Y in the last quarter"

# Safe parameter extraction: products=["X"], excluded=["Y"], timeframe="Q4"

When Function Calling Is Overkill

Bad Use Case #1: Simple Data Extraction

Instead of this complexity:

@function_call
def extract_contact_info(name: str, email: str, phone: str):
    return {"name": name, "email": email, "phone": phone}

Just use a clear prompt:

prompt = """
Extract contact information from this text and return as JSON:
{"name": "...", "email": "...", "phone": "..."}

Text: {user_input}

JSON:
"""

Bad Use Case #2: When You Need Reliability

Function calling adds failure modes:

- JSON parsing errors in function arguments

- Schema validation failures

- Function execution exceptions

- Multiple round-trips increase latency

For critical operations, direct prompting with output validation is more reliable.
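"Output validation" here can be as small as parsing the model's reply and checking it against the shape you asked for. The sketch below assumes the contact schema from the extraction prompt above; `parse_contact_json` and `REQUIRED_FIELDS` are hypothetical names, and a production system might prefer a schema library over hand-written checks.

```python
import json
from typing import Any, Dict, Optional

REQUIRED_FIELDS = {"name": str, "email": str, "phone": str}


def parse_contact_json(raw: str) -> Optional[Dict[str, Any]]:
    """Return the parsed object if it matches the expected shape, else None."""
    # Models often wrap JSON in prose; keep only the outermost {...} span
    start, end = raw.find("{"), raw.rfind("}")
    if start == -1 or end <= start:
        return None
    try:
        data = json.loads(raw[start:end + 1])
    except json.JSONDecodeError:
        return None
    if not isinstance(data, dict):
        return None
    for field, expected_type in REQUIRED_FIELDS.items():
        if not isinstance(data.get(field), expected_type):
            return None  # missing field or wrong type
    return data


good = parse_contact_json(
    'Sure! {"name": "Ada", "email": "ada@example.com", "phone": "555-0100"}'
)
bad = parse_contact_json('{"name": "Ada", "email": null}')
```

A `None` result is the signal to fall back: re-prompt once with the validation error, route to a human, or reject the input. That is one code path to test, with no schema round-trips.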

Bad Use Case #3: Simple Classification Tasks

Don't build a function for:

@function_call
def classify_sentiment(text: str, sentiment: str):  # sentiment: "positive"|"negative"|"neutral"
    ...

When this works better:

prompt = f"Classify sentiment as positive/negative/neutral: {text}\nSentiment:"

What Actually Works: Smart Function Call Management

When you do need function calling, most implementations are fragile and overcomplicated. Here's a production-ready approach:

import json
from typing import Dict, Any, Callable, Optional
import asyncio
from dataclasses import dataclass

@dataclass
class FunctionResult:
    success: bool
    data: Any
    error: Optional[str] = None

class FunctionCallManager:
    def __init__(self, llm_client):
        self.llm = llm_client
        self.functions = {}
        self.max_retries = 3

    def register_function(self, name: str, description: str, parameters: Dict):
        """Register a function with proper error handling"""
        def decorator(func: Callable):
            self.functions[name] = {
                "function": func,
                "description": description,
                "parameters": parameters
            }
            return func
        return decorator

    async def _execute_function_safely(self, name: str, args: Dict) -> FunctionResult:
        """Execute function with comprehensive error handling

        Why this matters: LLMs generate imperfect function arguments.
        Your system needs to handle malformed JSON, missing parameters,
        type mismatches, and runtime exceptions gracefully.
        """
        if name not in self.functions:
            return FunctionResult(False, None, f"Function '{name}' not found")

        try:
            result = await self.functions[name]["function"](**args)
            return FunctionResult(True, result)
        except TypeError as e:
            return FunctionResult(False, None, f"Parameter error: {str(e)}")
        except Exception as e:
            return FunctionResult(False, None, f"Execution error: {str(e)}")

    async def execute_with_functions(self, prompt: str, max_iterations: int = 5) -> str:
        """Execute with function calling and retry logic

        Why iteration limits matter: LLMs can get stuck in function-calling
        loops, especially when functions return errors. Always bound your
        execution to prevent runaway costs and infinite loops.
        """
        function_definitions = []
        for name, details in self.functions.items():
            function_definitions.append({
                "name": name,
                "description": details["description"],
                "parameters": details["parameters"]
            })

        messages = [{"role": "user", "content": prompt}]
        iterations = 0

        while iterations < max_iterations:
            iterations += 1

            response = await self.llm.chat_completion(
                messages=messages,
                functions=function_definitions,
                function_call="auto"
            )

            # No function call - we're done
            if not response.function_call:
                return response.content

            # Parse and execute function call
            function_name = response.function_call.name
            try:
                function_args = json.loads(response.function_call.arguments)
            except json.JSONDecodeError:
                # LLM generated invalid JSON - retry with error feedback.
                # Sent as a user message so the model does not read the
                # error as something it said itself.
                messages.append({
                    "role": "user",
                    "content": "Error: Invalid JSON in function arguments. Please retry."
                })
                continue

            # Execute function safely
            result = await self._execute_function_safely(function_name, function_args)

            if result.success:
                messages.append({
                    "role": "function",
                    "name": function_name,
                    # `is not None` so empty results ([] or 0) are reported as-is
                    "content": json.dumps(result.data) if result.data is not None else "Success"
                })
            else:
                messages.append({
                    "role": "function",
                    "name": function_name,
                    "content": f"Error: {result.error}"
                })

        return "Max iterations reached. The task could not be completed."

# Example: When function calling actually makes sense
# (llm_client and db are assumed to be defined elsewhere)
manager = FunctionCallManager(llm_client)

@manager.register_function(
    name="search_database",
    description="Search products with complex filtering. Use when user needs specific product combinations or constraints.",
    parameters={
        "type": "object",
        "properties": {
            "category": {"type": "string", "description": "Product category"},
            "max_price": {"type": "number", "description": "Maximum price filter"},
            "in_stock": {"type": "boolean", "description": "Only show in-stock items"},
            "tags": {"type": "array", "items": {"type": "string"}, "description": "Required product tags"}
        },
        "required": ["category"]
    }
)
async def search_database(category: str, max_price: float = None, in_stock: bool = True, tags: list = None):
    """Actual database search with complex logic"""
    # This genuinely needs function calling because:
    # 1. Dynamic parameter combinations
    # 2. SQL injection prevention
    # 3. Complex business logic for filtering
    query = "SELECT * FROM products WHERE category = %s"
    params = [category]

    if max_price is not None:  # a max_price of 0 is still a valid filter
        query += " AND price <= %s"
        params.append(max_price)

    if in_stock:
        query += " AND stock > 0"

    if tags:
        query += " AND tags && %s"  # PostgreSQL array overlap
        params.append(tags)

    # Execute safe parameterized query
    return await db.execute(query, params)

The Bottom Line

Function calling is a precision tool, not a general solution. Use it when you genuinely need dynamic behavior that prompts can't handle reliably. For everything else, write better prompts.

The best function calling systems are the ones you don't need to build.

AI Agents: The 90% Failure Rate Reality

AI agents are the hot new thing. They're also the fastest way to burn money and ship unreliable systems.

The Agent Hype vs. Reality

Every company is building "AI agents" now. Most are shipping chatbots with extra steps and calling them autonomous systems. This disconnect between marketing and reality is burning through budgets and destroying user trust.

What Everyone Thinks an Agent Is

The marketing promise: "Give our agent a high-level goal, and it'll autonomously plan, execute, and adapt until the job is done."

The reality: Most "agents" are deterministic workflows with LLM reasoning sprinkled at decision points. They're not autonomous—they're just more complex prompt chains.

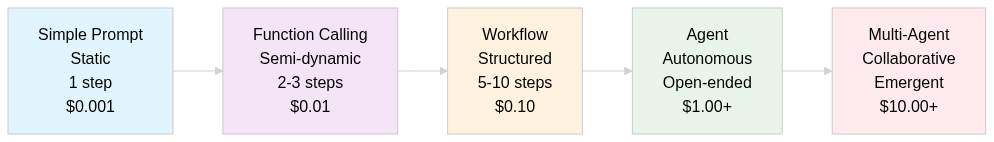

The Spectrum of AI System Complexity

Understanding where your system actually falls on this spectrum prevents over-engineering and sets realistic expectations:

Simple Prompt: Single interaction, no memory

- "Summarize this document"

- Success rate: 95%

- Cost: Minimal

Function Calling: Structured interactions with external systems

- "Book a restaurant based on my preferences"

- Success rate: 80%

- Cost: Low

Workflow: Multi-step process with defined decision points

- "Research competitors and create a comparison report"

- Success rate: 60%

- Cost: Medium

Agent: Autonomous planning and execution toward goals

- "Launch a marketing campaign for our new product"

- Success rate: 30%

- Cost: High

Multi-Agent: Multiple autonomous agents collaborating

- "Optimize our entire sales funnel using data from all departments"

- Success rate: 10%

- Cost: Extremely high

Why Most Agent Projects Fail

Failure Mode #1: Complexity Without Purpose

Teams build agents because they can, not because they should. The question isn't "Can we make this autonomous?" but "Should this be autonomous?"

Most business processes benefit from human oversight, not elimination. Building an agent to "autonomously manage our social media" sounds cool until it posts something that tanks your brand.

Failure Mode #2: Ignoring the Error Propagation Problem

In a 10-step workflow:

- If each step has 90% reliability

- Overall success rate: P(10 steps) = 0.9 × ... × 0.9 (10 times) = 0.9^10 = 35%

Errors in sequential steps aren't independent - a mistake in step 3 will cascade through all subsequent steps, so the success rate is actually worse.
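The compounding arithmetic is worth running for your own numbers. The sketch below treats steps as independent, as the formula above does, so it is a baseline that ignores cascading errors; the function names are illustrative.

```python
def chain_success_rate(step_reliability: float, steps: int) -> float:
    """Probability that every step succeeds, assuming independent steps."""
    return step_reliability ** steps


def max_steps_for_target(step_reliability: float, target: float) -> int:
    """Largest number of steps that still meets the target success rate."""
    if not 0.0 < step_reliability < 1.0:
        raise ValueError("step_reliability must be strictly between 0 and 1")
    steps = 0
    while chain_success_rate(step_reliability, steps + 1) >= target:
        steps += 1
    return steps


ten_step = chain_success_rate(0.9, 10)    # about 0.35
budget = max_steps_for_target(0.9, 0.8)   # steps allowed at an 80% target
```

With 90% per-step reliability, an 80% end-to-end target leaves room for only two steps. Raising per-step reliability or cutting steps are the only levers.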

Agents don't magically solve this. They make it worse by adding:

- Planning failures (agent chooses wrong approach)

- Tool integration failures (API timeouts, rate limits)

- Context drift (agent forgets original goal)

- Hallucination amplification (small errors compound)

Failure Mode #3: Underestimating Monitoring and Control

The more autonomous your system, the more sophisticated your monitoring needs to be. Most teams build the agent first and realize afterward they have no visibility into what it's actually doing.

You need:

- Real-time decision logging

- Intervention mechanisms

- Rollback capabilities

- Cost controls

- Performance metrics

This infrastructure often costs more than the agent itself.

What Actually Works: Constrained Autonomy

Successful agent systems in production share common characteristics: they're narrowly focused, heavily monitored, and designed to fail safely.

The Bounded Agent Pattern

Instead of building general-purpose agents, build agents that excel at specific, well-defined tasks with clear success criteria:

import json
import asyncio
from typing import Dict, List, Optional
from enum import Enum
from dataclasses import dataclass
from datetime import datetime

class TaskStatus(Enum):
    PLANNING = "planning"
    EXECUTING = "executing"
    COMPLETED = "completed"
    FAILED = "failed"
    REQUIRES_HUMAN = "requires_human"

@dataclass
class TaskStep:
    id: str
    description: str
    status: TaskStatus
    result: Optional[Dict] = None
    error: Optional[str] = None
    timestamp: Optional[datetime] = None

class BoundedAgent:
    """
    An agent that operates within strict constraints and provides full observability.

    Why bounded? Unlimited autonomy leads to:
    - Unpredictable costs
    - Uncontrolled behavior
    - Difficult debugging
    - Regulatory compliance issues

    This pattern provides autonomy where it adds value while maintaining control.
    """

    def __init__(self, llm_client, max_steps: int = 10, max_cost: float = 5.0):
        self.llm = llm_client
        self.max_steps = max_steps
        self.max_cost = max_cost  # Dollar limit
        self.current_cost = 0.0
        self.steps_executed = []
        self.tools = {}

    def add_tool(self, name: str, func, cost_per_call: float = 0.01):
        """Register tools with cost tracking"""
        self.tools[name] = {
            "function": func,
            "cost": cost_per_call
        }

    async def execute_task(self, goal: str, constraints: Dict = None) -> Dict:
        """
        Execute a task with built-in safeguards and full traceability.

        Why constraints matter: Without boundaries, agents become
        unpredictable and expensive. Constraints provide:
        - Cost control
        - Behavior boundaries
        - Clear success/failure criteria
        - Audit trails for compliance
        """
        constraints = constraints or {}

        # Create execution plan
        plan = await self._create_plan(goal, constraints)
        if not plan:
            return self._create_failure_result("Failed to create execution plan")

        # Execute plan with monitoring
        status = TaskStatus.EXECUTING
        for step_idx, planned_step in enumerate(plan["steps"]):
            # steps_executed is a list, so compare its length to the limit
            if len(self.steps_executed) >= self.max_steps:
                return self._create_failure_result("Max steps exceeded")

            if self.current_cost >= self.max_cost:
                return self._create_failure_result("Cost limit exceeded")

            step = TaskStep(
                id=f"step_{step_idx}",
                description=planned_step["description"],
                status=TaskStatus.EXECUTING,
                timestamp=datetime.now()
            )
            # Record the step up front so the goal check below can see it
            self.steps_executed.append(step)

            try:
                # Execute step with timeout
                result = await asyncio.wait_for(
                    self._execute_step(planned_step),
                    timeout=30.0
                )
                step.result = result
                step.status = TaskStatus.COMPLETED

                # Check if we've achieved the goal
                if await self._goal_achieved(goal, self.steps_executed):
                    status = TaskStatus.COMPLETED
                    break

            except asyncio.TimeoutError:
                step.error = "Step execution timeout"
                step.status = TaskStatus.FAILED
                status = TaskStatus.REQUIRES_HUMAN
                break

            except Exception as e:
                step.error = str(e)
                step.status = TaskStatus.FAILED

                # Decide whether to continue or escalate
                if await self._should_escalate(step.error):
                    status = TaskStatus.REQUIRES_HUMAN
                    break
        else:
            # The plan ran out without the goal check passing
            status = TaskStatus.FAILED

        return {
            "goal": goal,
            "status": status.value,
            "steps_executed": len(self.steps_executed),
            "total_cost": self.current_cost,
            "execution_log": [vars(step) for step in self.steps_executed],
            "requires_human": status == TaskStatus.REQUIRES_HUMAN
        }

    async def _create_plan(self, goal: str, constraints: Dict) -> Optional[Dict]:
        """Create execution plan with explicit constraints"""
        prompt = f"""
        Create a detailed execution plan for: {goal}

        Constraints:
        {json.dumps(constraints, indent=2)}

        Available tools: {list(self.tools.keys())}

        Return a JSON plan with:
        - "steps": Array of specific actions (max {self.max_steps})
        - "success_criteria": How to determine if goal is achieved
        - "risk_assessment": Potential failure points

        Plan:
        """

        try:
            response = await self.llm.chat_completion([
                {"role": "user", "content": prompt}
            ])
            self.current_cost += 0.01  # Estimate LLM cost
            return json.loads(response)
        except Exception:
            return None

    async def _execute_step(self, planned_step: Dict) -> Dict:
        """Execute a single step with error handling"""
        tool_name = planned_step.get("tool")
        if tool_name not in self.tools:
            raise ValueError(f"Unknown tool: {tool_name}")

        # Track tool cost
        self.current_cost += self.tools[tool_name]["cost"]

        # Execute tool
        result = await self.tools[tool_name]["function"](
            **planned_step.get("parameters", {})
        )
        return {"tool": tool_name, "result": result}

    async def _goal_achieved(self, goal: str, execution_history: List) -> bool:
        """Determine if the goal has been sufficiently achieved"""
        # default=str: TaskStatus and datetime values are not JSON-serializable
        history = json.dumps(
            [vars(step) for step in execution_history[-3:]], indent=2, default=str
        )
        prompt = f"""
        Goal: {goal}

        Execution history:
        {history}

        Has the goal been achieved? Respond with only "yes" or "no".
        """

        response = await self.llm.chat_completion([
            {"role": "user", "content": prompt}
        ])
        self.current_cost += 0.005
        return response.strip().lower() == "yes"

    async def _should_escalate(self, error: str) -> bool:
        """Determine if error requires human intervention"""
        # Simple heuristics - in production, use more sophisticated logic
        escalation_triggers = [
            "permission denied",
            "authentication failed",
            "payment required",
            "legal review needed"
        ]
        return any(trigger in error.lower() for trigger in escalation_triggers)

    def _create_failure_result(self, reason: str) -> Dict:
        return {
            "status": "failed",
            "reason": reason,
            "steps_executed": len(self.steps_executed),
            "total_cost": self.current_cost,
            "execution_log": [vars(step) for step in self.steps_executed]
        }

# Example: Research agent with clear boundaries
# (llm_client and the three tool functions are assumed to be defined elsewhere)
async def create_research_agent():
    agent = BoundedAgent(llm_client, max_steps=5, max_cost=2.0)

    # Add specific, reliable tools
    agent.add_tool("web_search", web_search_tool, cost_per_call=0.10)
    agent.add_tool("summarize", summarization_tool, cost_per_call=0.05)
    agent.add_tool("save_research", save_to_database, cost_per_call=0.01)
    return agent

# Usage with explicit constraints
async def main():
    agent = await create_research_agent()
    result = await agent.execute_task(
        goal="Research sustainable packaging trends in 2024",
        constraints={
            "max_sources": 5,
            "focus_regions": ["North America", "Europe"],
            "must_include": ["cost analysis", "environmental impact"],
            "exclude_topics": ["political content", "unverified claims"]
        }
    )
    return result

Cost Optimization: Where Money Actually Gets Wasted

LLM costs spiral out of control not because of high API prices, but because developers don't understand where the money actually goes.

The Hidden Cost Multipliers Nobody Talks About

Most teams focus on per-token pricing while ignoring the real cost drivers that can make identical systems 10x more expensive.

Cost Killer #1: Context Window Waste

The Problem: Developers stuff everything into context "just in case," not realizing they're paying for tokens the LLM never needed.

A typical RAG query:

- Useful context: 500 tokens

- Irrelevant context: 3,000 tokens

- You pay for all 3,500 tokens, every request

At 10,000 requests/day with GPT-4 at $0.03 per 1K input tokens, the 3,000 irrelevant tokens alone cost about $900/day, or roughly $27,000/month.
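The arithmetic is simple enough to script, which makes it easy to rerun with your own traffic and pricing. This sketch assumes $0.03 per 1K input tokens (the GPT-4 input rate used in the pricing table later in this article) and a 30-day month; the function and constant names are illustrative.

```python
def daily_token_cost(tokens_per_request: int, requests_per_day: int,
                     price_per_1k: float) -> float:
    """Dollars per day spent on a given number of input tokens per request."""
    return tokens_per_request * requests_per_day * price_per_1k / 1000


PRICE_PER_1K_INPUT = 0.03  # assumed GPT-4 input rate, in dollars

wasted_per_day = daily_token_cost(3_000, 10_000, PRICE_PER_1K_INPUT)
total_per_day = daily_token_cost(3_500, 10_000, PRICE_PER_1K_INPUT)
wasted_per_month = wasted_per_day * 30
```

Under these assumptions the irrelevant context costs about $900 per day and the full 3,500-token prompt about $1,050 per day, so roughly 86% of the input spend buys nothing.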

Cost Killer #2: Retry Hell

The Problem: Systems retry failed requests without understanding why they failed, multiplying the cost of every failure by the retry count.

# What most people do (cost disaster)
for attempt in range(5):  # Up to 5x cost multiplier
    try:
        return await llm.chat_completion(messages)
    except Exception:
        continue  # Retry identical request

The Reality: If your prompt failed once due to unclear instructions, it'll fail 5 times. You just paid 5x for the same garbage output.
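A cheaper pattern is to retry only failures that a retry can plausibly fix, such as timeouts and rate limits, and to let everything else surface immediately. The sketch below is one way to do that under stated assumptions: `TransientError` is a hypothetical exception your client wrapper would raise for retryable conditions, and `call_with_retry` is an illustrative name.

```python
import asyncio
import random
from typing import Awaitable, Callable, Tuple, Type


class TransientError(Exception):
    """Hypothetical marker for failures worth retrying (timeouts, rate limits)."""


async def call_with_retry(
    make_request: Callable[[], Awaitable[str]],
    retryable: Tuple[Type[BaseException], ...] = (TransientError, asyncio.TimeoutError),
    max_attempts: int = 3,
    base_delay: float = 0.5,
) -> str:
    """Retry only transient failures, with exponential backoff and jitter."""
    for attempt in range(1, max_attempts + 1):
        try:
            return await make_request()
        except retryable:
            if attempt == max_attempts:
                raise  # out of attempts; surface the error
            # Back off: base_delay, then 2x, 4x, ... plus random jitter
            delay = base_delay * 2 ** (attempt - 1)
            await asyncio.sleep(delay + random.uniform(0, delay / 2))
    raise RuntimeError("max_attempts must be at least 1")
```

Any exception outside `retryable`, including a validation error raised on bad model output, propagates on the first attempt. That failure then costs one request instead of five and points you at the prompt that needs fixing.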

Cost Killer #3: The Temperature Tax

The Problem: Using temperature > 0 when you need deterministic outputs.

# Expensive and inconsistent
response = llm.chat_completion(
    messages=messages,
    temperature=0.7  # Why are you paying for randomness you don't want?
)

For most business use cases (data extraction, classification, structured output), you want temperature=0. Higher temperatures cost the same but give inconsistent results, forcing you to retry or post-process outputs.

Production Cost Optimization

Token Management That Matters

Most token counting is performative. Developers obsess over prompt length while ignoring the real cost killers: redundant requests, bloated context windows, and retry loops that multiply costs 5x. Here's what actually reduces costs:

import tiktoken
from typing import List, Dict
import re

class ProductionTokenOptimizer:
    def __init__(self, model_name: str = "gpt-4"):
        self.encoding = tiktoken.encoding_for_model(model_name)
        self.token_costs = {
            "gpt-4": {"input": 0.03/1000, "output": 0.06/1000},
            "gpt-3.5-turbo": {"input": 0.001/1000, "output": 0.002/1000}
        }

    def estimate_cost(self, prompt: str, expected_output_tokens: int, model: str) -> float:
        """Calculate actual cost before making request

        Why this matters: Most teams discover their costs are unsustainable
        AFTER deployment. Cost estimation during development prevents
        expensive surprises in production.
        """
        input_tokens = len(self.encoding.encode(prompt))
        costs = self.token_costs[model]

        input_cost = input_tokens * costs["input"]
        output_cost = expected_output_tokens * costs["output"]
        return input_cost + output_cost

    def compress_context_intelligently(self, context: str, max_tokens: int) -> str:
        """Compress context while preserving information density

        Why naive truncation fails: Cutting off context at arbitrary
        points often removes the exact information needed to answer
        the query. This approach prioritizes information density.
        """
        paragraphs = context.split('\n\n')
        compressed = []
        current_tokens = 0

        # Sort by information density (simple heuristic)
        scored_paragraphs = []
        for p in paragraphs:
            tokens = len(self.encoding.encode(p))
            # Prioritize paragraphs with numbers, proper nouns, specific terms
            density_score = (
                len(re.findall(r'\d+', p)) * 2 +        # Numbers
                len(re.findall(r'[A-Z][a-z]+', p)) +    # Capitalized words (rough proxy for proper nouns)
                len(re.findall(r'[a-z]{8,}', p))        # Complex words
            ) / max(tokens, 1)
            scored_paragraphs.append((density_score, p, tokens))

        # Include highest density paragraphs first.
        # Note: the output is in density order, not original document order.
        for score, paragraph, tokens in sorted(scored_paragraphs, reverse=True):
            if current_tokens + tokens <= max_tokens:
                compressed.append(paragraph)
                current_tokens += tokens
            else:
                # Partial inclusion for the last paragraph
                remaining = max_tokens - current_tokens
                if remaining > 50:  # Only if meaningful space left
                    truncated = self.truncate_to_tokens(paragraph, remaining)
                    compressed.append(truncated)
                break

        return '\n\n'.join(compressed)

    def truncate_to_tokens(self, text: str, max_tokens: int) -> str:
        """Truncate precisely to token count"""
        tokens = self.encoding.encode(text)
        if len(tokens) <= max_tokens:
            return text
        return self.encoding.decode(tokens[:max_tokens])

Caching: The Only Optimization That Matters

The brutal truth: If you're not caching LLM responses, you're probably wasting 40-60% of your budget.

Most applications have massive request overlap that developers don't notice:

import hashlib
import json
import re
import time
from typing import Dict, List, Optional

import redis


class IntelligentLLMCache:
    def __init__(self, redis_client: redis.Redis):
        self.redis = redis_client
        self.hit_rate = 0.0
        self.total_requests = 0
        self.cache_hits = 0

    def _normalize_prompt(self, messages: List[Dict], model: str, temperature: float) -> str:
        """Create consistent cache keys

        Why normalization matters: Minor variations in whitespace,
        formatting, or parameter order can prevent cache hits.
        This version only strips surrounding whitespace and fixes key
        order; it does not detect differently worded but equivalent prompts.
        """
        # Normalize messages
        normalized = []
        for msg in messages:
            normalized_msg = {
                "role": msg["role"],
                "content": msg["content"].strip()
            }
            normalized.append(normalized_msg)

        # Create deterministic key
        cache_data = {
            "messages": normalized,
            "model": model,
            "temperature": temperature
        }
        key_string = json.dumps(cache_data, sort_keys=True)
        # MD5 is fine here: the hash is a lookup key, not a security boundary
        return hashlib.md5(key_string.encode()).hexdigest()

    async def get_cached_response(self, messages: List[Dict], model: str,
                                  temperature: float) -> Optional[Dict]:
        """Get cached response with hit rate tracking"""
        key = f"llm_cache:{self._normalize_prompt(messages, model, temperature)}"
        self.total_requests += 1

        # NOTE: redis.Redis is a synchronous client, so this call blocks the event loop
        cached = self.redis.get(key)
        if cached:
            self.cache_hits += 1
        # Update on every lookup so that misses lower the hit rate too
        self.hit_rate = self.cache_hits / self.total_requests

        if not cached:
            return None

        cached_data = json.loads(cached)
        return {
            "response": cached_data["response"],
            "cached": True,
            "cache_age": time.time() - cached_data["timestamp"]
        }

    async def cache_response(self, messages: List[Dict], model: str,
                             temperature: float, response: str, ttl: int = 86400):
        """Cache response with intelligent TTL

        Why TTL matters: Stale responses for time-sensitive queries
        can be worse than no cache at all. Different query types
        need different cache durations.
        """
        key = f"llm_cache:{self._normalize_prompt(messages, model, temperature)}"

        # Detect time-sensitive queries (simple heuristic). Word boundaries
        # keep "now" from matching inside words such as "know".
        query_text = " ".join(msg["content"] for msg in messages).lower()
        time_sensitive_terms = ["today", "now", "current", "latest", "recent"]
        if any(re.search(rf"\b{term}\b", query_text) for term in time_sensitive_terms):
            ttl = min(ttl, 3600)  # At most 1 hour for time-sensitive queries

        cache_data = {
            "response": response,
            "timestamp": time.time(),
            "model": model,
            "temperature": temperature
        }
        self.redis.setex(key, ttl, json.dumps(cache_data))

    def get_cache_stats(self) -> Dict:
        """Monitor cache performance for cost optimization"""
        return {
            "hit_rate": self.hit_rate,
            "total_requests": self.total_requests,
            # Rough estimate: assumes each avoided request would have cost about $0.03
            "estimated_cost_savings": self.cache_hits * 0.03
        }
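To see what the normalization step actually buys you, here is a minimal standalone sketch of the key function. `make_cache_key` is a hypothetical free-function version of `_normalize_prompt`, written for illustration only, with SHA-256 swapped in for MD5 (either works for a cache key). It shows that whitespace and key-order differences collapse to one key, while even a one-letter wording change still misses.

```python
import hashlib
import json
from typing import Dict, List


def make_cache_key(messages: List[Dict], model: str, temperature: float) -> str:
    """Hypothetical standalone version of _normalize_prompt (illustration only)."""
    normalized = [
        {"role": m["role"], "content": m["content"].strip()} for m in messages
    ]
    payload = {"messages": normalized, "model": model, "temperature": temperature}
    # sort_keys keeps the JSON stable regardless of dict insertion order
    return hashlib.sha256(json.dumps(payload, sort_keys=True).encode()).hexdigest()


a = make_cache_key([{"role": "user", "content": "Summarize this ticket"}], "gpt-4", 0.0)
b = make_cache_key([{"content": "  Summarize this ticket\n", "role": "user"}], "gpt-4", 0.0)
c = make_cache_key([{"role": "user", "content": "Summarise this ticket"}], "gpt-4", 0.0)

print(a == b)  # only whitespace and key order differ
print(a == c)  # one letter differs
```

The second comparison is the important one: exact-match caching only pays off when requests really do repeat verbatim, which is why the hit rate is worth measuring rather than assuming.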

class CostOptimizedLLMClient:
    def __init__(self, llm_client, cache: IntelligentLLMCache):
        self.llm = llm_client
        self.cache = cache
        self.total_cost = 0.0

    async def chat_completion(self, messages: List[Dict], model: str = "gpt-4",
                              temperature: float = 0.0, **kwargs) -> Dict:
        """LLM client with cost tracking and optimization"""
        # Check cache first
        cached = await self.cache.get_cached_response(messages, model, temperature)
        if cached:
            # Same shape as an uncached result; a cache hit costs nothing
            return {**cached, "cost": 0.0, "total_session_cost": self.total_cost}

        # Make LLM request (the wrapped client is expected to return the response text)
        response = await self.llm.chat_completion(
            messages=messages,
            model=model,
            temperature=temperature,
            **kwargs
        )

        # Track costs (simplified): roughly 4 characters per token
        input_tokens = sum(len(msg["content"]) for msg in messages) // 4
        output_tokens = len(response) // 4

        if model == "gpt-4":
            cost = input_tokens * 0.00003 + output_tokens * 0.00006
        else:  # Any other model is priced as gpt-3.5-turbo
            cost = input_tokens * 0.000001 + output_tokens * 0.000002

        self.total_cost += cost

        # Cache response
        await self.cache.cache_response(messages, model, temperature, response)

        return {
            "response": response,
            "cached": False,
            "cost": cost,
            "total_session_cost": self.total_cost
        }

The Real Cost Optimization Strategy

Stop optimizing tokens. Start optimizing requests.

- Cache everything - 40-60% cost reduction immediately

- Use temperature=0 for deterministic tasks - repeatable outputs are the ones that are safe to cache

- Compress context intelligently - not just truncation

- Implement cost limits - prevent runaway spending

- Monitor hit rates - cache optimization beats token optimization
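The "implement cost limits" item is the one piece of this list the code so far does not show. Here is a minimal sketch of one way to do it, assuming you have a pre-flight estimate (for example from `estimate_cost`) and the actual cost after each call. `BudgetGuard` and `BudgetExceededError` are hypothetical names invented for this example, not part of any library.

```python
class BudgetExceededError(RuntimeError):
    """Raised when a request would push spending past the configured limit."""


class BudgetGuard:
    """Hypothetical helper: tracks spend and refuses requests past a hard cap."""

    def __init__(self, limit_usd: float):
        self.limit_usd = limit_usd
        self.spent_usd = 0.0

    def check(self, estimated_cost_usd: float) -> None:
        """Call before the request, with a pre-flight cost estimate."""
        if self.spent_usd + estimated_cost_usd > self.limit_usd:
            raise BudgetExceededError(
                f"request of ${estimated_cost_usd:.4f} would exceed the "
                f"${self.limit_usd:.2f} limit (spent ${self.spent_usd:.4f})"
            )

    def record(self, actual_cost_usd: float) -> None:
        """Call after the request, with the actual cost."""
        self.spent_usd += actual_cost_usd


guard = BudgetGuard(limit_usd=1.00)
guard.check(0.09)   # passes: nothing spent yet
guard.record(0.09)
guard.record(0.85)  # pretend a few more requests went through

try:
    guard.check(0.09)  # 0.94 already spent, so this request is refused
except BudgetExceededError as exc:
    print(f"blocked: {exc}")
```

Checking before the call rather than after is the point: an agent stuck in a loop gets stopped at the limit instead of being reported on next month's invoice. In a multi-process deployment the counter would need to live somewhere shared, such as the Redis instance already used for caching.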

The companies with sustainable LLM costs aren't using cheaper models or clever prompts. They're making fewer requests for the same functionality.

Every request you don't make costs $0.
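To put rough numbers on that, here is a back-of-the-envelope sketch using the gpt-4 prices from the cost table earlier in this article. The workload figures (tokens per request, requests per day, hit rate) are hypothetical and picked only to keep the arithmetic easy to follow; plug in your own.

```python
# Per-token prices copied from the token_costs table earlier in this article
GPT4_INPUT = 0.03 / 1000
GPT4_OUTPUT = 0.06 / 1000

# Hypothetical workload, chosen only to make the arithmetic easy to follow
prompt_tokens = 2_000
output_tokens = 500
requests_per_day = 10_000
hit_rate = 0.5

cost_per_request = prompt_tokens * GPT4_INPUT + output_tokens * GPT4_OUTPUT
daily_without_cache = requests_per_day * cost_per_request
# Only cache misses reach the API, so spend scales with (1 - hit_rate)
daily_with_cache = daily_without_cache * (1 - hit_rate)

print(f"per request:  ${cost_per_request:.2f}")
print(f"no cache:     ${daily_without_cache:,.2f}/day")
print(f"50% hit rate: ${daily_with_cache:,.2f}/day")
```

With these inputs a request costs about $0.09, so 10,000 requests a day is roughly $900, and a 50% hit rate brings that down to about $450. Trimming 10% off every prompt would save far less than that, which is why hit rate is the number to watch.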

The Bottom Line: Building AI Systems That Actually Work

The difference between successful AI implementations and expensive failures isn't about using the latest models or clever prompts. It's about engineering discipline and understanding the real constraints of production systems.

What Success Actually Looks Like

Cost Predictability: Systems that operate within defined budgets, not ones that generate surprise bills. Well-architected AI implementations typically reduce operational costs by 40-60% compared to naive approaches.

Reliability at Scale: User-facing systems that maintain 95%+ uptime and consistent response quality, even under load. This means designing for graceful degradation, not just happy-path scenarios.

Development Velocity: Teams that can iterate and deploy new features without rebuilding core infrastructure. Proper abstractions and monitoring reduce feature development time from weeks to days.

Compliance and Auditability: Systems that can explain their decisions and maintain audit trails. This isn't just nice-to-have—it's necessary for regulated industries and enterprise customers.

User Adoption: AI features that users actually use and depend on, not ones that get disabled after the demo. This requires understanding user workflows, not just technical capabilities.

The Real Competitive Advantage

Companies winning with AI aren't using secret models or revolutionary techniques. They're applying software engineering fundamentals to LLM-based systems:

- Bounded complexity instead of unlimited autonomy

- Intelligent caching instead of brute-force requests

- Graceful error handling instead of retry loops

- Cost controls built into the architecture

- Observable systems that surface problems before they impact users
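As one illustration of "graceful error handling instead of retry loops", here is a minimal sketch that tries the model a bounded number of times and then degrades to a fallback instead of retrying forever. `call_with_fallback` and `flaky_model` are hypothetical names made up for this example, and the broad `except Exception` is a shortcut a real system would narrow to specific errors.

```python
import asyncio
from typing import Awaitable, Callable


async def call_with_fallback(
    primary: Callable[[], Awaitable[str]],
    fallback: Callable[[], str],
    max_attempts: int = 2,
    timeout_s: float = 10.0,
) -> dict:
    """Try the model a bounded number of times, then degrade instead of looping."""
    for attempt in range(1, max_attempts + 1):
        try:
            text = await asyncio.wait_for(primary(), timeout=timeout_s)
            return {"response": text, "degraded": False, "attempts": attempt}
        except Exception:  # sketch only: real code should catch specific errors
            continue
    # Every attempt failed: return something useful rather than raising
    return {"response": fallback(), "degraded": True, "attempts": max_attempts}


async def flaky_model() -> str:
    # Stand-in for an LLM call that is currently failing
    raise ConnectionError("upstream unavailable")


def static_fallback() -> str:
    return "Summary unavailable right now; showing the original text."


result = asyncio.run(call_with_fallback(flaky_model, static_fallback))
print(result)
```

The `degraded` flag matters as much as the fallback itself: it lets the UI tell the user what happened and gives monitoring something to count.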

Implementation Strategy

Start with constrained, high-value use cases where failure modes are understood and manageable. Build monitoring and cost controls from day one. Focus on reliability over features.

Most importantly: treat AI components like any other external service dependency. They will fail, they will be expensive, and they will behave unexpectedly. Design your systems accordingly.

The AI revolution isn't about replacing human judgment with artificial intelligence. It's about augmenting human capabilities with reliable, cost-effective automation that actually works in production.

The Reality Check

Most companies fail at LLM integration because they treat it like a science project instead of an engineering problem. They optimize for demo wow-factor instead of production reliability.

The companies that succeed focus on the boring stuff: error handling, cost management, caching, monitoring, and having backup plans when everything goes wrong.

That's what we'll cover next: how to actually deploy and maintain these systems in production.

Coming up next: "AI Engineering Infrastructure Reality Check" - Where we dive into Docker, deployments, and all the ways your beautiful AI system will break in production.

Now go build something that can handle real users. 🚀
